The Macro: Everyone’s Shipping AI Agents, Almost Nobody Is Shipping Good Ones

Here’s the thing about AI coding agents in 2025: everyone has one. GitHub Copilot, Cursor, Devin, Claude Code, and the list keeps growing. The agent wars are basically settled at the infrastructure layer, and now the messy question is what you actually do with all this automated code generation once the novelty wears off.

The answer, if you’ve spent more than a few weeks trying to ship production software with any of these tools, is: you spend a lot of time fixing the output. Hallucinated APIs. Context that drifts mid-session. Business logic that’s syntactically valid and completely wrong. The benchmark numbers look great. The pull requests look less great.

The software engineering job market has contracted roughly 22% since January 2022, according to Pragmatic Engineer’s 2025 state-of-the-market piece, and AI tooling is a significant part of that story. Companies are betting heavily that agents can absorb a meaningful chunk of what junior engineers used to do. That bet only pays off if the agents are actually reliable, not just fast.

This is where things get interesting. The problem isn’t really the models. It’s that nobody has a clean way to define, test, and share what good agent behavior looks like for a specific codebase or domain. AWS has Kiro. There’s the BMAD method floating around. People are duct-taping together system prompts and praying. The code review layer is starting to address some of this, but upstream, at the skill definition level, it’s still pretty chaotic.

Tessl is trying to own that upstream layer.

The Micro: A Package Manager for How Your Agent Actually Thinks

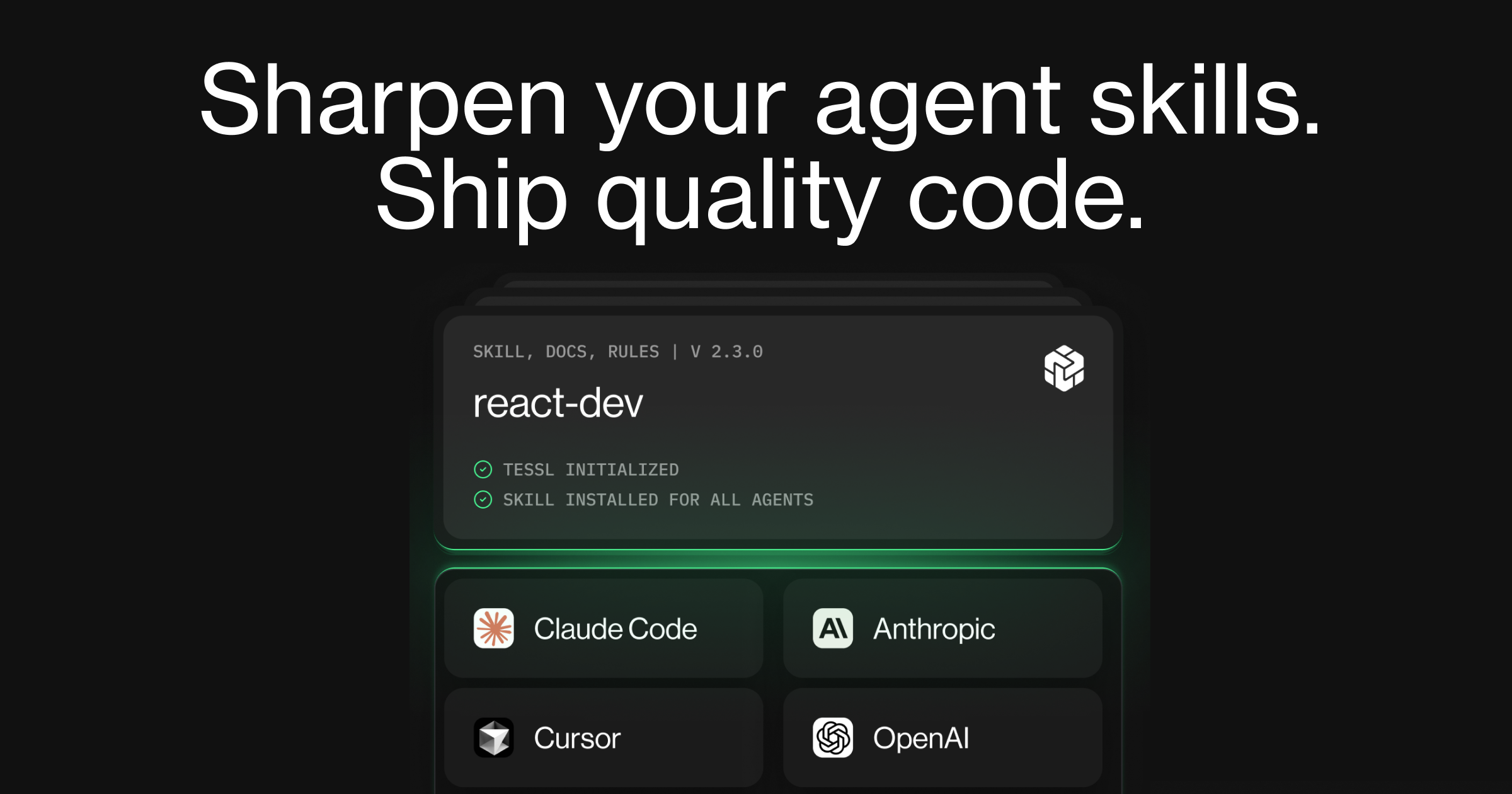

The core concept behind Tessl is something called agent skills. The pitch is that instead of vibe-prompting your AI agent and hoping for the best, you define explicit, testable skills that shape how it behaves. Think of it less like a prompt library and more like a package manager for agent cognition, which is either a very cool abstraction or a marketing layer on top of structured prompting depending on your level of cynicism.

The product lets developers submit skills to a public registry (no signup required, which is a genuinely smart friction-reduction move), evaluate how well those skills perform, and optimize them before baking them into a workflow. The public registry angle is important. It’s the same instinct that made npm and PyPI so sticky: make sharing the default, and let the community do the curation work over time.
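To make "explicit, testable skills" a little more concrete, here is a minimal sketch of what the idea could look like in code. Everything in it is an assumption for illustration: the `Skill` class, the `evaluate` function, the `safe-sql` example, and the string-matching check are all hypothetical and are not Tessl’s schema or API. The point is only the shape: a skill bundles instructions with checks, and evaluation scores agent output against those checks.

```python
from dataclasses import dataclass, field
from typing import Callable


# Hypothetical structure for illustration only; not Tessl's actual schema.
@dataclass
class Skill:
    name: str
    instructions: str  # guidance the agent would be given
    checks: list[Callable[[str], bool]] = field(default_factory=list)


def evaluate(skill: Skill, outputs: list[str]) -> float:
    """Return the fraction of outputs that pass every check in the skill."""
    if not outputs:
        return 0.0
    passed = sum(all(check(out) for check in skill.checks) for out in outputs)
    return passed / len(outputs)


def uses_parameterized_query(code: str) -> bool:
    # Crude stand-in for a real check: flag SQL built with an f-string.
    return 'execute(f"' not in code and "execute(f'" not in code


sql_skill = Skill(
    name="safe-sql",  # hypothetical skill name
    instructions="Always use parameterized queries; never interpolate user input into SQL.",
    checks=[uses_parameterized_query],
)

# Stand-ins for code an agent might have generated with the skill installed.
sample_outputs = [
    'cur.execute("SELECT * FROM users WHERE id = ?", (user_id,))',
    'cur.execute(f"SELECT * FROM users WHERE id = {user_id}")',
]

score = evaluate(sql_skill, sample_outputs)
print(f"{sql_skill.name}: {score:.0%} of outputs passed")
```

A real evaluation framework would need far stronger checks than string matching, but a pass rate like this is the kind of signal a registry would have to expose before anyone could trust a published skill.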

From what I can piece together, the skills themselves are composable and installable via a CLI-style interface. A `tessl install` command pattern is visible in their own published skill pages. So the developer experience is intentionally familiar. If you’ve used any package manager in the last decade, the mental model transfers.

Tessl got solid traction on launch day, which makes sense given the founder’s profile.

Guy Podjarny previously founded Snyk, which is now worth several billion dollars and basically taught a generation of developers to treat security as a first-class engineering concern rather than something you bolt on at the end. According to Crunchbase, he’s CEO at Tessl. According to a LinkedIn post from Armon Dadgar (HashiCorp co-founder), Tessl raised $125 million. That’s a real number for a company still in early product motion, and it signals that serious people believe this category is real.

The comparison to how Claude handles persistent memory and context is worth making here. A lot of the agent reliability problem is fundamentally about context management. Tessl seems to be attacking it from the definition side rather than the memory side, which is a different and potentially more durable approach.

The Verdict

I want this to work. The problem it’s pointing at is real and under-addressed, and the abstraction of a skills registry is the kind of thing that, if it gets adoption, could become genuinely load-bearing infrastructure for how teams work with agents.

But I have questions.

The public registry only matters if people actually publish good skills, and good skills only get published if the evaluation framework is rigorous enough that developers trust the signal. That’s a hard cold-start problem, and $125 million buys you runway but not community.

I’d also want to know how opinionated the skill schema is. If it’s too loose, you end up with a chaotic grab-bag that nobody can trust. Too rigid and adoption stalls because every team’s codebase has different needs. Getting that balance right is genuinely hard product work, the kind that doesn’t show up in a launch announcement.

At 30 days, the number I’d watch is return usage, not installs. At 60 days, whether any meaningful open-source projects adopt it as a standard. At 90 days, whether enterprise teams are treating the registry as something they actually depend on.

Guy built Snyk into a category. He might do it again. But the skills registry has to become the place developers actually go, not just the place they visit once.
