The Macro: The Bots Are Already Here and Your Dashboard Has No Idea

Somewhere in your analytics dashboard right now, there is a growing pile of traffic you are almost certainly not thinking about correctly. It looks like weird crawlers. Maybe some suspicious referrals. You’ve been ignoring it.

That traffic is AI agents. ChatGPT pulling context for a user query. Perplexity building a cited answer. Claude reading your docs to answer a question someone typed at 11pm. The agentic web, which is a slightly sci-fi way of saying “AI systems that browse things autonomously,” is already here and it’s growing fast. Most web analytics tools were designed in an era when traffic meant a human eyeball and a session. That assumption is quietly breaking.

This matters for a pretty concrete reason. If an AI answer engine is reading your site to construct answers about your product category, your SEO strategy is now partially a content strategy for machines. That’s genuinely different from what we understood SEO to be even two years ago. I’ve written before about how much of what we think we know about web traffic is based on increasingly bad assumptions, and the AI crawler problem is the most acute version of that right now.

The analytics market is enormous and still growing fast. Multiple research firms peg the data analytics space at somewhere between $70 billion and $82 billion in 2025, with projections across the board pointing to continued double-digit growth through the decade. The specific slice Siteline is going after, understanding AI-driven traffic and its downstream effects on human acquisition, is new enough that there’s no clean incumbent. Microsoft Clarity recently added some visibility into bot activity, according to a LinkedIn post from analyst Riccardo Gosteli, but that’s a feature, not a product. Google Analytics treats AI crawlers as noise to be filtered. Nobody is treating this as signal worth building a whole product around. Until now, anyway.

The Micro: Google Analytics for the AI That’s Already Visiting Your Site

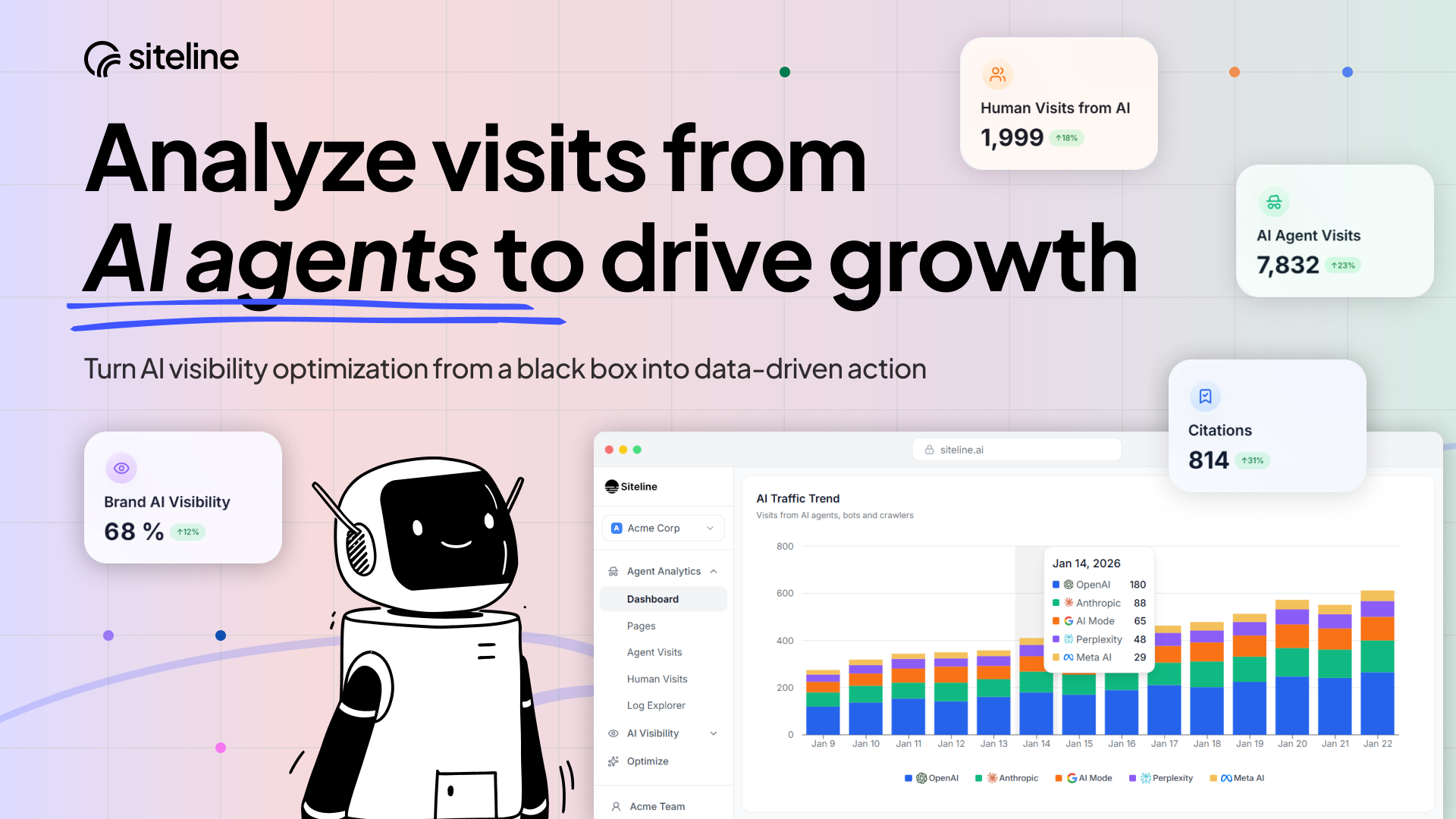

The pitch on Siteline is clean: think Google Analytics, but built for the reality that a significant and growing portion of your site’s visitors are AI systems, not humans. The team uses exactly that framing themselves on Reddit. The interesting product question is what you actually do with that information.

Here’s what the product does. You install it on your site (they say first insights in minutes, which is a promise a lot of analytics tools make and usually approximately deliver on). Siteline then tracks how AI agents and bots are interacting with your pages, breaking that down by platform, by page, and by topic. The platform breakdown is the part I find most interesting because it means you can see, say, that ChatGPT is heavily indexing your pricing page while Perplexity is mostly hitting your blog. That’s actionable in a way that “bots visited” is not.
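To make the platform-by-page breakdown concrete, here is a minimal sketch of the simplest possible version: match the self-identifying tokens some AI crawlers put in their user-agent strings, then count requests per platform and page. This is not Siteline’s method, which it hasn’t published in detail. The token list is a hypothetical, incomplete mapping built from names the vendors publish, and the sample log is made up. User agents can also be spoofed, so treat this as a first pass, not ground truth.

```python
from collections import Counter
from typing import List, Optional, Tuple

# Hypothetical, incomplete mapping of self-identifying user-agent tokens
# to the platform that publishes them. Real traffic needs a maintained list.
AGENT_TOKENS = {
    "GPTBot": "OpenAI",
    "ChatGPT-User": "OpenAI",
    "OAI-SearchBot": "OpenAI",
    "PerplexityBot": "Perplexity",
    "ClaudeBot": "Anthropic",
}


def classify(user_agent: str) -> Optional[str]:
    """Return the platform for a self-identified AI agent, or None if no token matches."""
    ua = user_agent.lower()
    for token, platform in AGENT_TOKENS.items():
        if token.lower() in ua:
            return platform
    return None


def breakdown(log: List[Tuple[str, str]]) -> Counter:
    """Count (platform, path) pairs across requests from recognized AI agents."""
    counts: Counter = Counter()
    for path, user_agent in log:
        platform = classify(user_agent)
        if platform is not None:  # human or unrecognized traffic is skipped
            counts[(platform, path)] += 1
    return counts


# Made-up sample log of (path, user-agent) pairs.
sample_log = [
    ("/pricing", "Mozilla/5.0 (compatible; GPTBot/1.1; +https://openai.com/gptbot)"),
    ("/blog/post", "Mozilla/5.0 (compatible; PerplexityBot/1.0)"),
    ("/pricing", "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"),
]

print(breakdown(sample_log))
```

Counting on (platform, path) pairs is what turns “bots visited” into “ChatGPT keeps reading the pricing page,” which is the actionable part. Topic-level grouping would need a further mapping from paths to topics.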

The second layer is the conversion angle. Siteline tracks whether AI traffic correlates with subsequent human visits. The theory is that if an AI agent reads your site today, a human who got that AI’s answer might show up tomorrow. Proving that causal chain with any real statistical confidence sounds hard, but even a directional signal would be valuable.
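A rough idea of what a “directional signal” could look like: correlate daily AI-agent visits with human visits a fixed number of days later. This is a hypothetical sketch, not how Siteline computes its conversion metric, and the one-day lag and daily granularity are assumptions. It also only measures correlation; a high value says nothing on its own about AI answers actually sending humans your way.

```python
from math import sqrt
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Plain Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / (sx * sy)


def lagged_correlation(
    ai_visits: Sequence[float], human_visits: Sequence[float], lag: int = 1
) -> float:
    """Correlate AI visits on day t with human visits on day t + lag."""
    if len(ai_visits) != len(human_visits):
        raise ValueError("series must cover the same days")
    if lag < 0 or lag >= len(ai_visits):
        raise ValueError("lag must be non-negative and shorter than the series")
    # Drop the last `lag` AI days and the first `lag` human days so pairs line up.
    return pearson(ai_visits[: len(ai_visits) - lag], human_visits[lag:])


# Made-up daily counts for one site.
ai = [120, 80, 150, 90, 200, 110]
human = [40, 52, 35, 61, 38, 70]
print(lagged_correlation(ai, human, lag=1))
```

Running it across several lags and many sites is what would tell you whether the effect is real or an artifact of, say, weekday traffic patterns that move both series together.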

It got solid traction on launch day, which makes sense. The problem is easy to explain to anyone running a site right now, and the frustration with existing tools is real.

The founder angle is worth a quick note. According to Crunchbase, Gloria Lin is co-founder and CEO and is Stanford-educated; a TechCrunch profile notes she was previously Stripe’s first product management hire. Joel Poloney is co-founder and CTO. That’s a credible technical and product pairing for something that requires both solid instrumentation and a clear point of view on what the data means.

For teams already thinking about how AI systems consume and surface structured data, Siteline is a complementary lens. Tools like Cube address the semantic layer. Siteline addresses the traffic layer.

The Verdict

I think this is a real problem and I think the timing is right. The annoying part is that the core value proposition depends on data quality that’s genuinely hard to get. Bot fingerprinting is an arms race. Some AI crawlers identify themselves cleanly. Others don’t. If Siteline’s attribution model relies on accurate agent identification and that identification is inconsistent across platforms, the insights could be directionally interesting but noisy enough to be frustrating.
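One common way to cut through spoofed user agents is to check whether a request that claims to be a given crawler comes from IP ranges that vendor publishes. The sketch below shows that check with Python’s standard `ipaddress` module. The ranges are placeholders from the RFC 5737 documentation blocks, not any vendor’s real addresses, and whether Siteline does anything like this is an open question. It also does nothing for agents that don’t identify themselves at all, which is the harder half of the arms race.

```python
import ipaddress

# Placeholder ranges: 192.0.2.0/24 and 198.51.100.0/24 are documentation-only
# networks (RFC 5737). Real checks would load each vendor's published list.
PUBLISHED_RANGES = {
    "OpenAI": [ipaddress.ip_network("192.0.2.0/24")],
    "Perplexity": [ipaddress.ip_network("198.51.100.0/24")],
}


def verify(claimed_platform: str, remote_ip: str) -> bool:
    """True only if remote_ip falls inside a range published for claimed_platform."""
    ip = ipaddress.ip_address(remote_ip)
    # Unknown platforms get an empty list, so they never verify.
    return any(ip in net for net in PUBLISHED_RANGES.get(claimed_platform, []))


# A request claiming to be an OpenAI crawler, from inside vs. outside the range.
print(verify("OpenAI", "192.0.2.10"))
print(verify("OpenAI", "198.51.100.7"))
```

A user agent that claims a platform but fails this check is better counted as “unverified” than silently attributed, which keeps the platform breakdown honest at the cost of some undercounting.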

What I’d want to know at 30 days: how accurate is the platform-level breakdown in practice, and what’s the methodology. At 60 days: are teams actually changing their content strategy based on the data, or is this a tab people open once and feel smart about. At 90 days: does the human conversion correlation hold up with enough sites to be a real feature, or is it aspirational for now.

The founders have the background to build this right. The use case is real. The risk is that this is a dashboard people find interesting once and don’t return to, rather than something sticky that embeds into a weekly workflow. If they can make the AI-to-human conversion signal reliable and repeatable, that’s the thing that makes this a tool you open every Monday instead of every quarter.
