The Macro: Developer Tools Got the AI Treatment, and Most of It Is Noise

API testing is one of those things everyone knows they should do better and almost nobody actually does. Not because engineers are lazy. Because writing test scripts is tedious, maintaining them is worse, and the tooling has historically demanded either a GUI you don’t want running in CI or a scripting setup that takes longer to configure than the API itself took to build.

Here’s the thing about the current moment in developer tooling: we’re drowning in AI wrappers. Everything is an AI-powered version of something that worked fine before. Most of it is surface-level. A GPT call duct-taped to a UI that would have been considered mid in 2019.

But underneath that noise, there’s a real and specific problem getting solved in pockets. The genuinely interesting work is happening in the CLI layer, where developers live anyway, and where AI assistance has the most room to reduce annoying friction. Cline already pushed into this space hard, betting that AI agents belong inside your pipeline rather than on top of it. That instinct is right.

API testing specifically has a clear before-and-after. Before: Postman for GUI folks, custom pytest or Jest setups for teams that care, and a lot of endpoints that just go untested because nobody had time. After, maybe: describe what you want to verify in plain English and let something else generate the scaffolding. The question is always whether the AI actually understands your API well enough to write useful tests, or whether it just confidently produces plausible-looking garbage.

That’s the bet Octrafic is making. And it’s not a crazy one.

The Micro: One Binary, No Test Scripts, Surprisingly Coherent

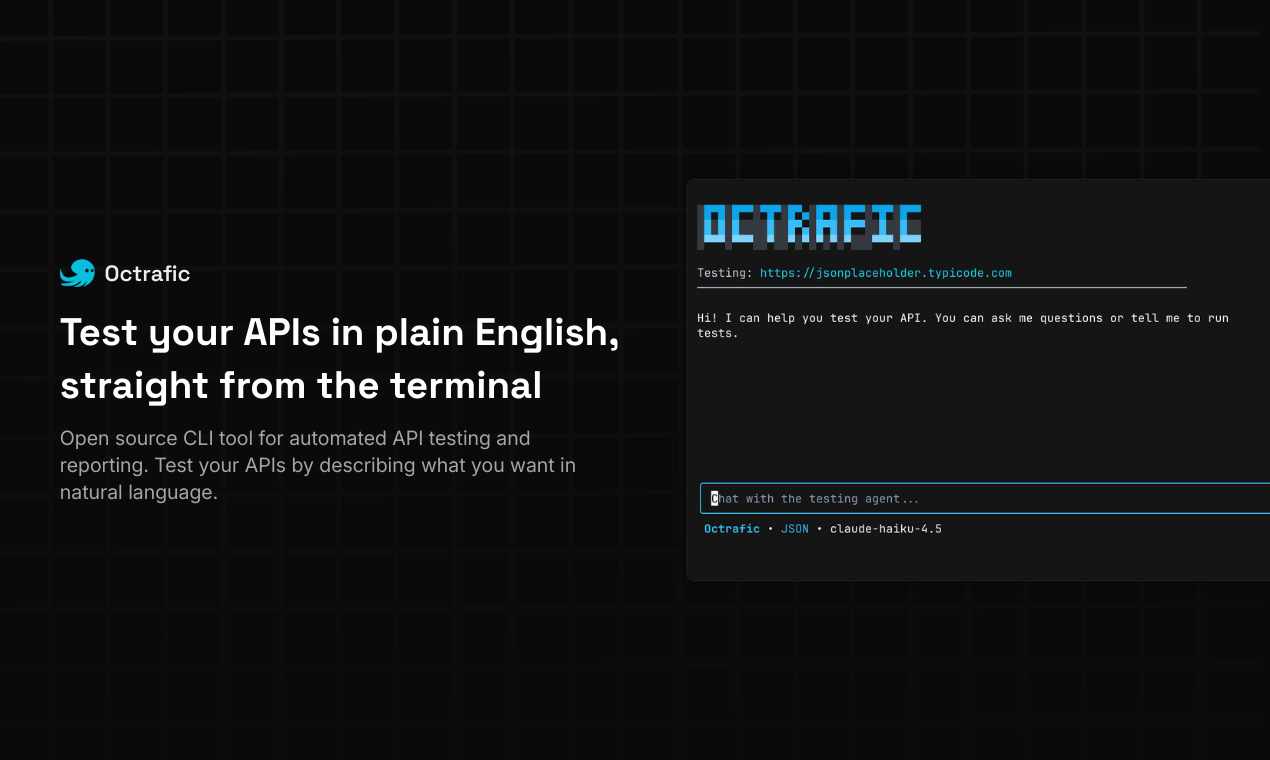

Octrafic is a CLI tool. Open source. Single binary. You point it at an OpenAPI spec or a live endpoint, describe what you want to test in plain English, and it generates requests, validates the responses, and spits out a PDF report. That’s the pitch, and it’s pretty clean.

No GUI. No mocks. No test framework to install and configure. According to the product description, it works with OpenAI, Claude, Ollama, and any OpenAI-compatible provider. The Ollama support is what lets you run the model locally if you’re sensitive about sending your API specs to a third party. That’s a meaningful design choice. A lot of teams will not pipe internal API schemas to an external model. Supporting Ollama is the difference between a toy and something an enterprise security team will actually consider.

The plain English input is the core bet. Instead of writing a test file that says “send a POST to /users with these headers and assert the response is 201,” you say something like “make sure creating a user with a missing email returns a 400” and Octrafic works out the mechanics. Whether that works reliably across weird edge cases and poorly documented APIs is the real question, and I don’t have enough hands-on time with it to tell you definitively.
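For contrast, here is roughly what the hand-written version of those two checks looks like. This is a minimal sketch, not anything Octrafic generates: the `/users` endpoint, the `StubUsersAPI` stand-in server, and the `post_status` and `run_checks` helpers are all hypothetical names invented for illustration, and the stub exists only so the example runs without a real service.

```python
import json
import threading
import urllib.error
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class StubUsersAPI(BaseHTTPRequestHandler):
    """Hypothetical stand-in for a real service: POST /users requires an email."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        body = json.loads(self.rfile.read(length) or b"{}")
        if self.path != "/users":
            status = 404
        elif body.get("email"):
            status = 201
        else:
            status = 400
        self.send_response(status)
        self.send_header("Content-Length", "0")
        self.end_headers()

    def log_message(self, *args):
        pass  # keep output quiet


# Ignore any proxy settings in the environment so local requests stay local.
OPENER = urllib.request.build_opener(urllib.request.ProxyHandler({}))


def post_status(base_url, path, payload):
    """Send a JSON POST and return the HTTP status code, including 4xx/5xx."""
    request = urllib.request.Request(
        base_url + path,
        data=json.dumps(payload).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    try:
        with OPENER.open(request) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # urllib raises on 4xx/5xx; the code is what we assert on


def run_checks():
    """Start the stub, run both checks, and return the observed status codes."""
    server = HTTPServer(("127.0.0.1", 0), StubUsersAPI)  # port 0 picks a free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    base_url = f"http://127.0.0.1:{server.server_port}"
    try:
        return {
            "valid_user": post_status(
                base_url, "/users", {"name": "Ada", "email": "ada@example.com"}
            ),
            "missing_email": post_status(base_url, "/users", {"name": "Ada"}),
        }
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    results = run_checks()
    assert results["valid_user"] == 201
    assert results["missing_email"] == 400
```

Most of that is plumbing around two assertions, and that plumbing is the part a plain English prompt is supposed to make disappear.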

It did solid numbers on launch day, which at minimum means the developer community noticed.

What I find interesting is the PDF export. That’s not a developer-for-developers feature. That’s a feature for showing someone else, a PM, a client, an auditor, that you ran tests. It signals that the team is thinking about Octrafic as part of a workflow, not just a clever hack. The same instinct showed up in how Surfpool approached tooling for Solana developers, where the interesting product decisions were about fit into an existing professional context, not just raw capability.

The open source angle also matters here. Developers will actually look at what it’s doing before they trust it with a real API.

The Verdict

I’ll be direct: Octrafic is probably overhyped in the sense that the “plain English API testing” framing sounds easier than it will be in practice. Getting an LLM to generate correct, useful tests against a well-documented API with a clean spec is tractable. Getting it to do useful work against a messy internal service with half-documented endpoints and inconsistent response shapes is a different problem entirely.

But here’s the thing. The single binary, no-framework, Ollama-compatible angle is genuinely good product thinking. This isn’t trying to replace a full QA pipeline. It’s trying to give one developer a fast way to verify something isn’t obviously broken, without spinning up a whole testing infrastructure. That’s a real use case. A lot of small teams would pay for that if it works.

At 30 days I’d want to know how it handles undocumented or partially documented endpoints. At 60 days I’d want to see whether the PDF reports are actually showing up in real workflows or just getting generated and ignored. At 90 days, the question is whether the open source community starts contributing edge case handling, because that’s what will determine whether this stays useful or slowly drifts into demo territory.

The developer tools space has room for this. I just want to see it survive contact with a genuinely messy API before I call it a win.