The Macro: Everyone Has an AI Coding Agent. Nobody Has a Way to Wrangle Them.

Something shifted in the last eighteen months. It stopped being impressive that you could ask an AI to write a function. Now the expectation is that the AI should just go handle the whole ticket while you work on something else. Claude Code, OpenAI’s Codex, Cursor, and Cline (which we’ve covered at length here) all got genuinely good, faster than most people expected.

But here’s the thing nobody really solved: what do you do with five of them running at once?

The mental model most developers are still using is one agent, one task, babysit it until it finishes. That’s fine. It’s also basically the same as just doing the work yourself, only slower and with more clipboard activity. The interesting unlock isn’t the agent. It’s parallelism. And parallelism requires orchestration.

The AI productivity tools market was valued at around $8.8 billion in 2024, with projections pointing toward $36 billion by 2033, according to Grand View Research. That’s a lot of money chasing a space where, honestly, the UX layer hasn’t kept up with the capability layer. The agents got smarter. The interfaces for managing them are still mostly “one terminal window, good luck.”

The companies positioned here are a mixed bag. Some are building the agents themselves. Some, like Base44, are abstracting away the infrastructure underneath. Superset is doing something more specific: it’s not trying to be the agent. It’s trying to be the thing you use to run a bunch of agents without having a breakdown.

That’s a real gap. Whether it’s a startup-sized gap is the question.

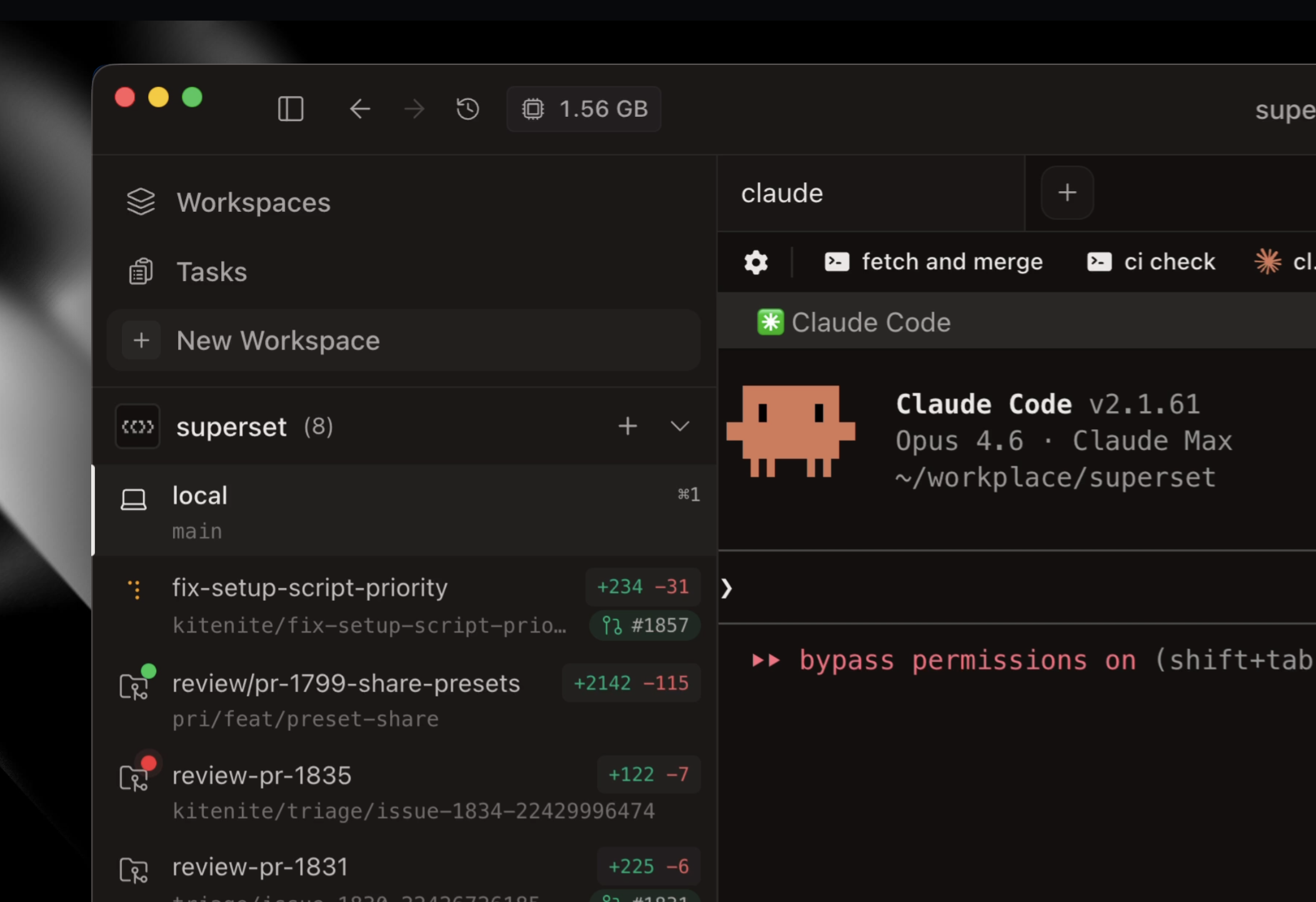

The Micro: One Dashboard, Many Agents, Theoretically No Chaos

Superset describes itself as a “turbocharged IDE” and the pitch is pretty direct. You spin up multiple coding agents, each one isolated in its own sandbox so they’re not stepping on each other’s work, and you monitor all of them from a single interface. When one needs input, you get notified. When it’s done, you review the diff and move on.

The sandbox isolation piece is the part I find most technically interesting. One of the real failure modes of multi-agent setups right now is agents writing to the same files, or making conflicting assumptions about state. If Superset is actually enforcing clean separation between agent workspaces, that’s solving a real problem and not just a vibes problem. The GitHub star count on the project, nearly 2,900 according to one aggregator, doesn’t prove the isolation works, but it does suggest developers think the approach has merit.
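To make that failure mode concrete, here is a minimal Python sketch of what workspace isolation can look like. To be clear, this is not Superset’s implementation, which I haven’t seen. The `run_agent` and `run_isolated` functions, the `app.py` file name, and the copy-the-project-per-agent approach are all assumptions made up for illustration. The point is only that each agent edits its own private copy, so two agents touching the same file never collide.

```python
import shutil
import tempfile
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def run_agent(name: str, project: Path, sandbox_root: Path) -> Path:
    """Hypothetical stand-in for an agent: works only inside its own copy."""
    workspace = sandbox_root / name
    # Each agent gets a private copy of the project (assumed isolation strategy).
    shutil.copytree(project, workspace)
    target = workspace / "app.py"
    # Simulate an edit; the original project directory is never written to.
    target.write_text(target.read_text() + f"# edited by {name}\n")
    return workspace


def run_isolated(project: Path, agent_names: list[str]) -> dict[str, Path]:
    """Run every agent in parallel, each in a separate workspace directory."""
    sandbox_root = Path(tempfile.mkdtemp(prefix="agents-"))
    with ThreadPoolExecutor(max_workers=len(agent_names)) as pool:
        futures = {
            name: pool.submit(run_agent, name, project, sandbox_root)
            for name in agent_names
        }
        # Collect each agent's workspace path once it finishes.
        return {name: future.result() for name, future in futures.items()}
```

Calling `run_isolated(Path("my-project"), ["agent-a", "agent-b"])` would leave `my-project` untouched and return one workspace path per agent. A real tool would also need a way to merge the results back, which this sketch deliberately skips.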

The built-in diff viewer and editor matters too. Right now the review workflow for AI-generated code is kind of a mess. You context-switch into your IDE, you squint at what changed, you try to remember what you asked the agent to do. Having that review step native to the tool that ran the agent is a small UX decision that will actually save people time in practice.
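For a sense of what that review step boils down to, here is a small sketch using Python’s standard `difflib` module. The `review_diff` helper is a hypothetical name of my own, and nothing here reflects how Superset’s diff viewer actually works. It just shows the raw material a reviewer needs: the before and after of a file, rendered as a unified diff.

```python
import difflib


def review_diff(before: str, after: str, path: str) -> str:
    """Return a unified diff of one file's contents (hypothetical helper)."""
    lines = difflib.unified_diff(
        before.splitlines(keepends=True),
        after.splitlines(keepends=True),
        fromfile=f"a/{path}",
        tofile=f"b/{path}",
    )
    # unified_diff yields lines lazily; join them into one printable string.
    return "".join(lines)
```

Passing an agent’s before and after versions of a file to `review_diff` gives you text you can print or log next to the original task prompt, which is most of what “native review” needs to beat squinting at your IDE.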

I’ll be honest that the product website wasn’t available when I was writing this, so I’m working from the product description and what’s surfaced through the community. It did well when it launched, which tracks, given that it targets a problem developers are actively complaining about. A Hacker News thread on a related Codex tool surfaced at least one user saying they’re already using Superset as their primary terminal-adjacent workflow. That’s a signal.

The memory and context management issues that plague single-agent setups (something Claudebin tried to address from a different angle) get more complicated when you’re running many agents. I’d want to understand how Superset handles that specifically before calling the architecture fully baked.

Founder Taylor Pemberton comes from a design and product background, which explains why the positioning is clean. The pitch doesn’t oversell the AI. It sells the workflow.

The Verdict

I actually think this is a real product solving a real problem, which I don’t say about every tool that comes through here.

The bet Superset is making is that developers will move from “I use one AI agent sometimes” to “I run several agents in parallel as a core part of how I work.” That transition is happening. I’ve seen it in how people talk about their setups on forums, in how the agent tools themselves are evolving. The behavior is real.

The risk is timing and depth. If you’re too early on the workflow shift, you build for a power-user audience that’s too small to sustain you. If the major IDEs like Cursor or VS Code decide to solve the multi-agent orchestration problem natively, the dedicated tool loses its reason to exist fast.

At 30 days I’d want to know if developers are actually running this in production workflows or just experimenting. At 60 days I’d want to know if the sandbox isolation is holding up in real messy codebases and not just clean demos. At 90 days, retention tells you everything.

The thing that makes me think this has a real shot is that the complexity of managing agents is only going up. Someone has to build the control plane. Superset is trying to be that.
