The Macro: The Hidden Tax of Building With AI Agents

Here’s something the AI developer tooling hype cycle doesn’t talk about enough: the maintenance problem.

Everyone is sprinting to build with Claude Code, Cline, and whatever agentic framework drops next week. The tooling to scaffold new things is everywhere. The tooling to clean up what you’ve already built? Almost nonexistent. And the mess accumulates fast.

When you’re working with an AI coding agent at any real depth, you end up with a pile of custom slash commands and skills that reflect every phase of how you thought about a problem. Early iteration is messy by design. The issue is that nobody comes back to clean it up. You end up with overlapping commands doing roughly the same thing, broken references nobody noticed, and skills you defined in week one that haven’t been touched since. The agent just quietly works around them, or worse, uses them inconsistently.

This is not a niche problem. The open source developer tooling space is expanding, with several market research estimates putting the broader open source software market at CAGRs north of 16% through the mid-2030s. More developers building more things with more AI scaffolding means more technical debt, faster. The surface area for this kind of tooling problem is only getting larger.

The gap isn’t in new capability. It’s in observability and hygiene for the stuff you’ve already built. Tools like ByteRover’s approach to agent memory are tackling adjacent parts of this: what does your agent actually know, and where does it live? Skills Janitor is asking a related but distinct question: what have you told your agent to do, and is any of it actually working?

The auditing angle is underappreciated. Most developer tools in the AI agent space optimize for creation. Skills Janitor optimizes for reflection. That’s a different instinct, and honestly a rarer one.

The Micro: Nine Commands, Zero Drama, One Very Specific Job

Skills Janitor does exactly what it says. Nine slash commands. According to its listing in the awesome-claude-code-toolkit repository, it has zero dependencies. That detail matters more than it sounds.

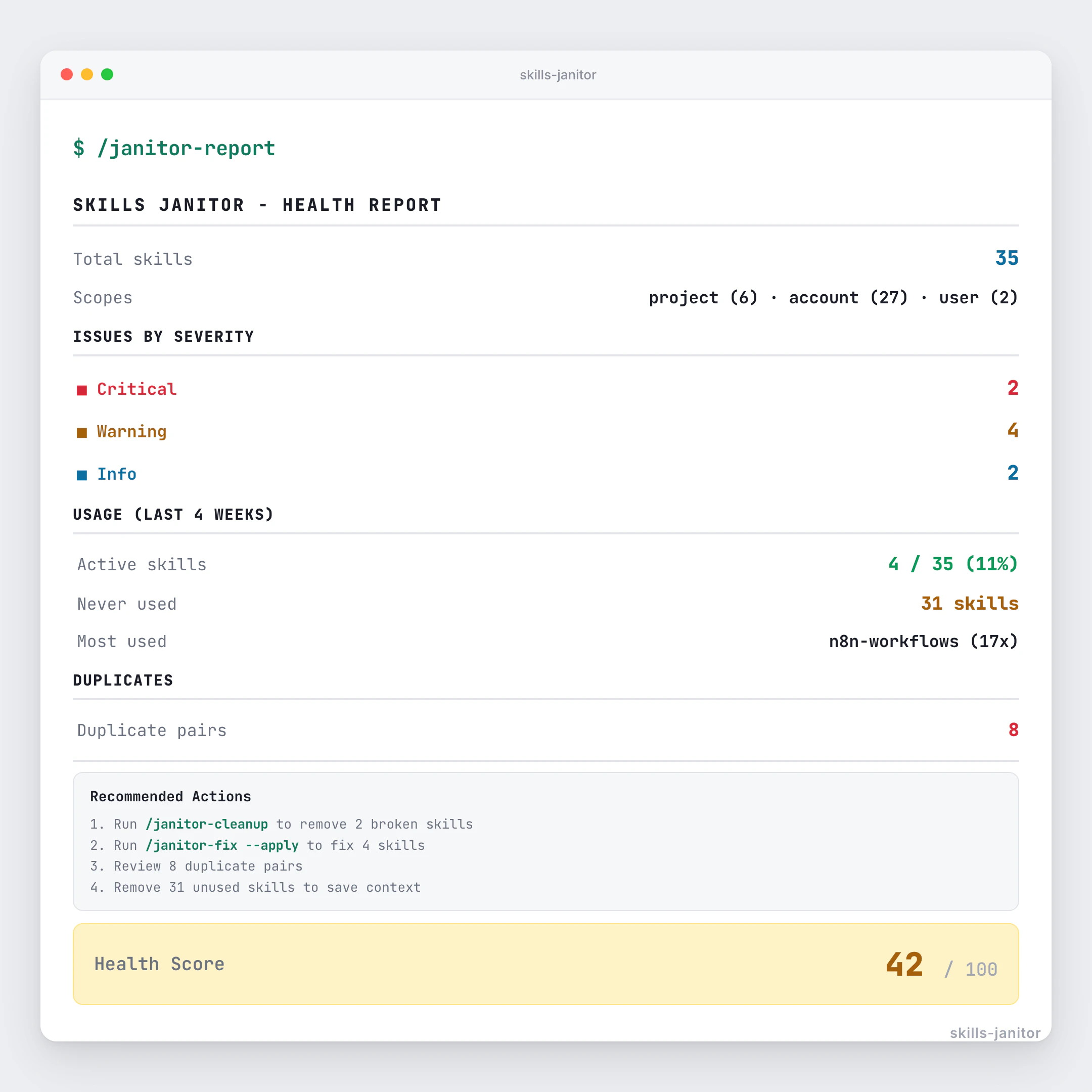

The core feature set covers auditing your Claude Code skills for what’s broken, deduplication to catch overlapping commands, linting, fixing, and usage tracking. That last one is the sleeper feature. Usage tracking tells you which skills you’ve actually been reaching for and which ones are just taking up space. It’s the kind of signal that’s obvious in retrospect but almost nobody builds first.
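To make the deduplication and usage-tracking ideas concrete, here is a minimal sketch of how that kind of check could work. This is not Skills Janitor's implementation, which I haven't read. The function names `find_overlaps` and `find_unused`, the 0.6 similarity threshold, and the input shape (a plain mapping of skill name to description, plus a mapping of invocation counts) are all assumptions made for illustration. It uses only Python's standard library.

```python
from difflib import SequenceMatcher
from itertools import combinations


def find_overlaps(skills: dict[str, str], threshold: float = 0.6) -> list[tuple[str, str, float]]:
    """Return pairs of skill names whose descriptions look alike.

    `skills` maps a skill name to its description text (hypothetical shape).
    `threshold` is an arbitrary cutoff; tune it against your own skills.
    """
    overlaps = []
    # Compare every unordered pair once, in a stable (sorted) order.
    for (name_a, desc_a), (name_b, desc_b) in combinations(sorted(skills.items()), 2):
        ratio = SequenceMatcher(None, desc_a.lower(), desc_b.lower()).ratio()
        if ratio >= threshold:
            overlaps.append((name_a, name_b, round(ratio, 2)))
    return overlaps


def find_unused(skills: dict[str, str], usage_counts: dict[str, int]) -> list[str]:
    """Return skills with no recorded invocations (hypothetical usage log)."""
    return sorted(name for name in skills if usage_counts.get(name, 0) == 0)


if __name__ == "__main__":
    skills = {
        "run-tests": "Run the project test suite and summarize failures",
        "test-runner": "Run the project test suite and summarize the failures",
        "changelog": "Draft a changelog entry from recent commits",
    }
    usage_counts = {"run-tests": 14, "changelog": 2}
    print(find_overlaps(skills))
    print(find_unused(skills, usage_counts))
```

Character-level similarity is a blunt instrument: it catches near-copies but misses two skills that do the same job in different words. It is enough to show why the usage signal matters, though. When two skills overlap, the invocation counts tell you which one to keep.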

The smartest decision here is scope. This tool does not try to be a full Claude Code management platform. It is specifically, deliberately a janitor. The name is not ironic. It cleans up after you. That restraint is a product decision, and it’s the right one. The more I look at AI developer tools that tried to be everything, the more I respect the ones that picked a lane. Cline’s focus on living inside the pipeline rather than replacing it is the same instinct.

The riskiest bet is the audience size. Claude Code has real adoption, and it shows up in places like cultofclaude.com’s skills directory, where Skills Janitor is already listed. But this tool has no utility if you’re not already deep enough into Claude Code to have accumulated skill debt. That’s a narrower slice than the general “AI developer tools” label implies.

It’s free and open source, which eliminates the conversion problem entirely. There’s no paywall friction here.

It got solid traction at launch, landing at #4 for the day, which suggests the people who needed it recognized it immediately.

If I were building this, I’d think hard about a visual diff output for the duplicate detection step. Right now I’m inferring from the description that output is CLI-based. A quick summary report you could paste into a PR description would make the linting and deduplication results a lot more shareable.
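Here is roughly what I have in mind, as a sketch rather than a spec. The `Finding` type, its fields, and `render_report` are hypothetical names I made up for illustration; nothing here reflects the tool's actual output format. It takes a list of audit findings and renders a Markdown table you could drop into a PR description.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    """One audit result (hypothetical shape, not the tool's real schema)."""
    skill: str
    kind: str    # e.g. "duplicate", "broken-reference", "unused"
    detail: str


def render_report(findings: list[Finding]) -> str:
    """Render findings as a Markdown summary suitable for a PR description."""
    if not findings:
        return "### Skill audit\n\nNo issues found."
    lines = [
        "### Skill audit",
        "",
        f"{len(findings)} issue(s) found.",
        "",
        "| Skill | Issue | Detail |",
        "| --- | --- | --- |",
    ]
    # Group by issue kind, then by skill name, so the table reads predictably.
    for f in sorted(findings, key=lambda f: (f.kind, f.skill)):
        detail = f.detail.replace("|", "\\|")  # keep stray pipes from breaking the table
        lines.append(f"| `{f.skill}` | {f.kind} | {detail} |")
    return "\n".join(lines)


if __name__ == "__main__":
    print(render_report([
        Finding("test-runner", "duplicate", "overlaps with run-tests"),
        Finding("deploy-old", "unused", "never invoked"),
    ]))
```

Plain Markdown is the point: it renders in a PR, in a chat message, and in a terminal without any extra tooling, which fits the zero-dependency spirit of the tool.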

The Verdict: Nail Gun for a Real Problem, Tiny But Loyal Market Ahead

I’ll say it directly: this tool is good. Not in a hype way. In a “this solves a real problem I have personally experienced” way.

The concern isn’t quality. It’s ceiling. Skills Janitor is useful to exactly the people who are already using Claude Code heavily enough to have made a mess. That population is real and growing, but it’s not enormous today. The tool is betting that the Claude Code power user base scales up fast enough to make a maintenance-focused utility worth maintaining.

I think that bet is probably right, just on a longer timeline than the launch energy suggests. The pattern I’ve seen in developer tooling is that the cleanup tools always lag the creation tools by 12 to 18 months, and then become quietly essential. The same dynamic played out in how AI agents handle context and output, where the scaffolding came first and the polish tools followed.

The zero-dependency, open source approach is the right call for a tool at this stage. It removes every possible reason not to try it.

My prediction: Skills Janitor doesn’t become a company, it becomes a standard. The commands get forked, adapted, pulled into other Claude Code management workflows, and the original repo quietly accumulates stars from developers who found it right when they needed it. That’s not a failure mode. For an open source utility with a job this specific, that’s actually the win condition.