The Macro: Everyone Built a Chatbot, Nobody Built a Coworker

Here’s the thing about the current wave of AI productivity tools: most of them are still just very fast search boxes with good PR. You ask, they answer. You copy, you paste, you do the actual work yourself. The AI sits there looking helpful while you remain the one holding the bag.

The market is enormous. Business productivity software is projected to grow from around $62.5 billion in 2024 to somewhere north of $140 billion within the next few years, according to multiple market research sources. That’s a lot of runway for a lot of products, and predictably, we’ve gotten a lot of products. Most of them are assistants in the truest (most limiting) sense of the word. They assist. They don’t act.

The more interesting product question, and the one a handful of teams are actually trying to answer, is what happens when you give the AI more surface area. Not just a chat window but actual access to your tools, your calendar, your codebase, your ad accounts. Not a co-pilot but something closer to an autonomous operator. Kimi Claw went in a similar direction with a lot of fanfare earlier this year, and the reception suggested people are genuinely hungry for AI that ships things rather than suggests things.

The honest complication is that “agentic AI” is now a phrase that means almost nothing because everyone uses it. Competitors like 11.ai are in the same general zip code as Viktor on paper. Claude Cowork exists. Tensol is being compared to Viktor directly in software directories. The category is real, but the differentiation is murky, and the products that survive will be the ones that actually run without a babysitter.

That’s the bet Viktor is making.

The Micro: It Lives in Slack and It Does Not Wait to Be Asked

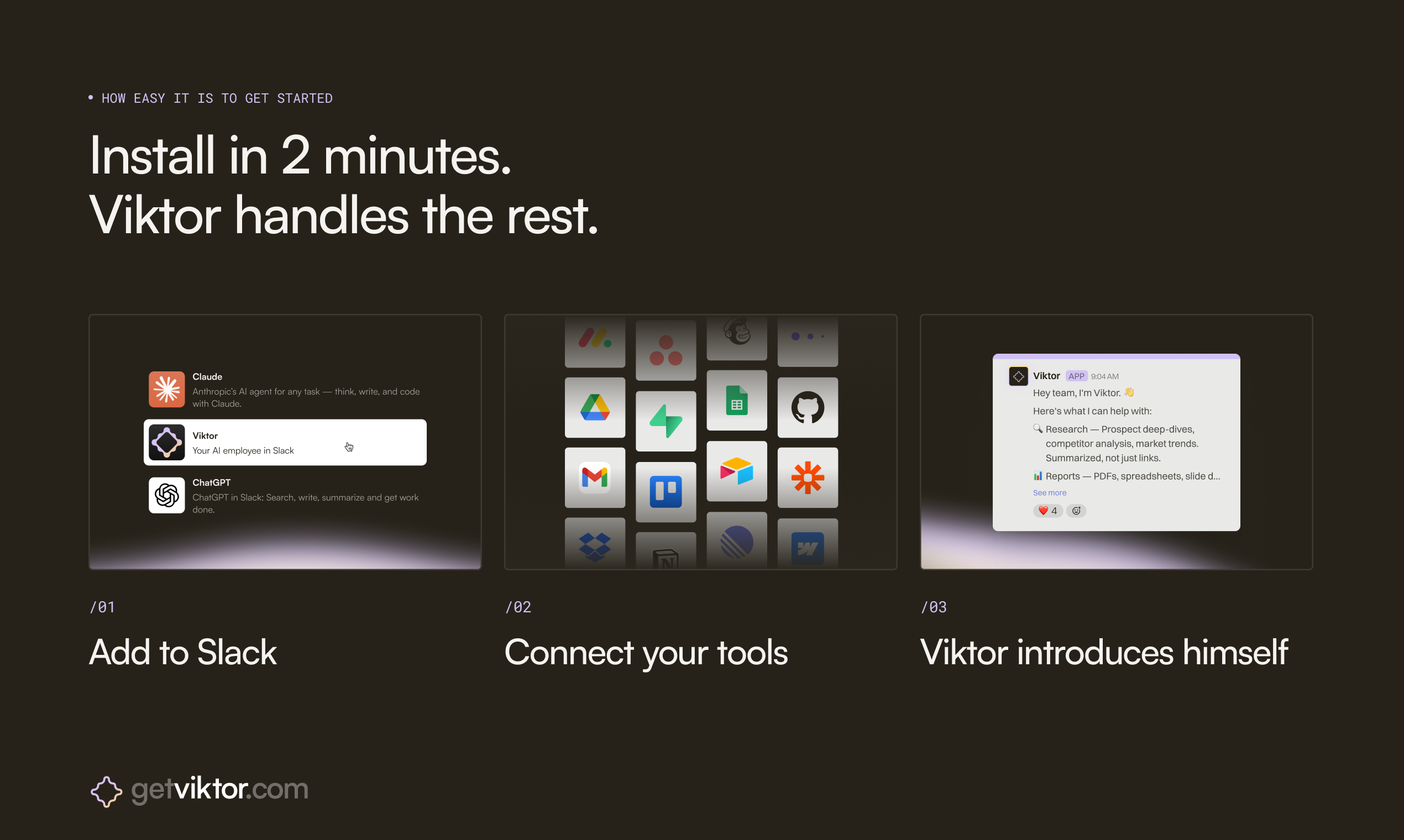

Viktor is, at its core, a persistent AI agent that runs inside Slack or Microsoft Teams and connects to over 3,000 external tools. That integration count covers the usual suspects: Stripe, Linear, Notion, Google Ads, and a long tail of whatever else your stack looks like. The pitch is not “ask Viktor to do something.” The pitch is “Viktor notices things and does them before you think to ask.”

That’s the part worth sitting with for a second.

Most AI tools are reactive. You prompt, they respond. Viktor’s positioning is explicitly proactive. According to the product site, it watches how your team works, spots problems, and proposes automations based on your actual workflows. The example they show in the UI mockup is Viktor noticing that design is behind on a landing page during a launch push and pinging the right person without being told to. That’s a meaningfully different product interaction than anything in the average AI chatbot demo.

The weeks-long context retention is the technical claim I find most interesting (and, frankly, the one I'm most skeptical about). The pitch is that Viktor runs for weeks without losing the thread of what it’s working on and learns your company progressively. If that’s real and not marketing copy, it solves one of the genuinely annoying limitations of current agents, which tend to be stateless and forgetful. Superset ran into exactly this kind of context management problem when building coordination tooling for AI coding agents, so the challenge is well-documented.

The product got solid traction when it launched, which tracks given how squarely it sits in the “AI that actually does stuff” conversation right now.

Founder Fryderyk Wiatrowski has an Oxford math background, according to his LinkedIn, and both he and co-founder Peter Albert previously worked on Jace.ai, an AI email product. So this team has at least one prior rep building AI agents in a productivity context, which is worth something.

Viktor starts free with $100 in credits and is SOC2 compliant, which matters the moment any enterprise buyer gets involved.

The Verdict

I think Viktor is solving the right problem. The gap between “AI that answers” and “AI that executes” is real and large, and the Slack-native approach is smart because it meets teams where they already coordinate.

What I’d want to know at 30 days: does the proactive detection actually work, or is it mostly a demo-mode feature that requires a lot of setup to produce anything useful? Proactive AI sounds great until it’s pinging the wrong person about the wrong thing at 11pm.

At 60 days: how does it handle mistakes? An AI that acts autonomously is also an AI that can autonomously do the wrong thing. The error recovery model matters as much as the happy path.

At 90 days: retention. Agents that run for weeks sound compelling until users realize they’ve lost visibility into what the agent is doing. Tools like Voicr are figuring out how to build AI workflows people actually trust long-term, and trust is the whole game here.

The “your last hire” framing is bold. It’s also the kind of framing that ages badly if the product doesn’t deliver. I’m watching this one closely, but I’m not calling it a sure thing yet.
